At Meta AI, we have made a significant advance in generative AI for speech. We created Voicebox, the first model that performs at a state-of-the-art level on speech-generation tasks it was not specifically trained for.

Like generative systems for images and text, Voicebox generates outputs in a wide range of styles, and it can both create outputs from scratch and modify a given sample. But instead of a picture or a passage of text, Voicebox produces high-quality audio clips. The model can synthesize speech across six languages, and it can also perform noise removal, content editing, style conversion, and diverse sample generation.

Before Voicebox, generative AI for speech had to be trained separately for each task using carefully prepared training data. Voicebox uses a new approach that learns from raw audio and an accompanying transcription alone. Unlike autoregressive models for audio generation, which can only extend the end of a clip they are given, Voicebox can modify any part of a sample.

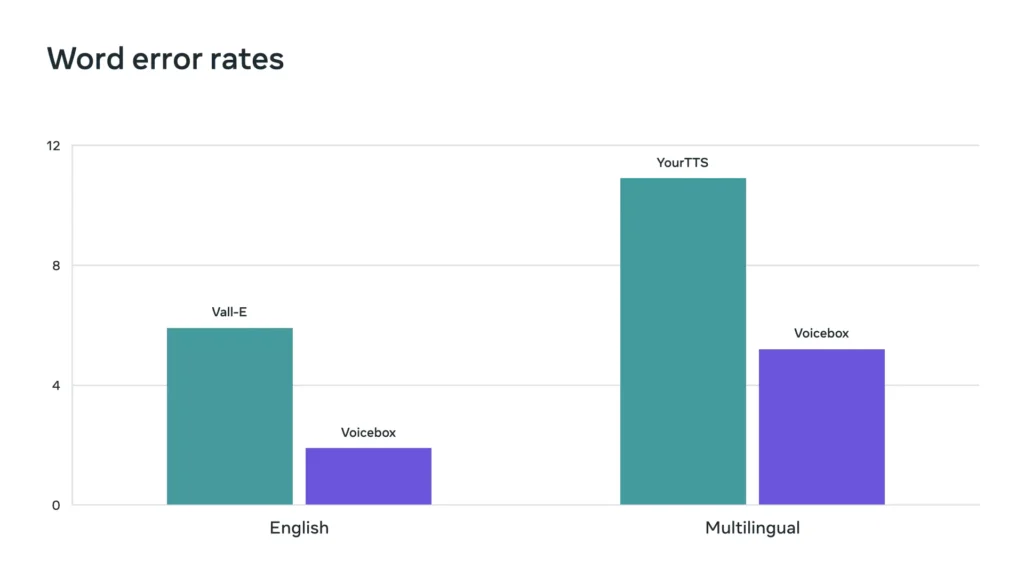

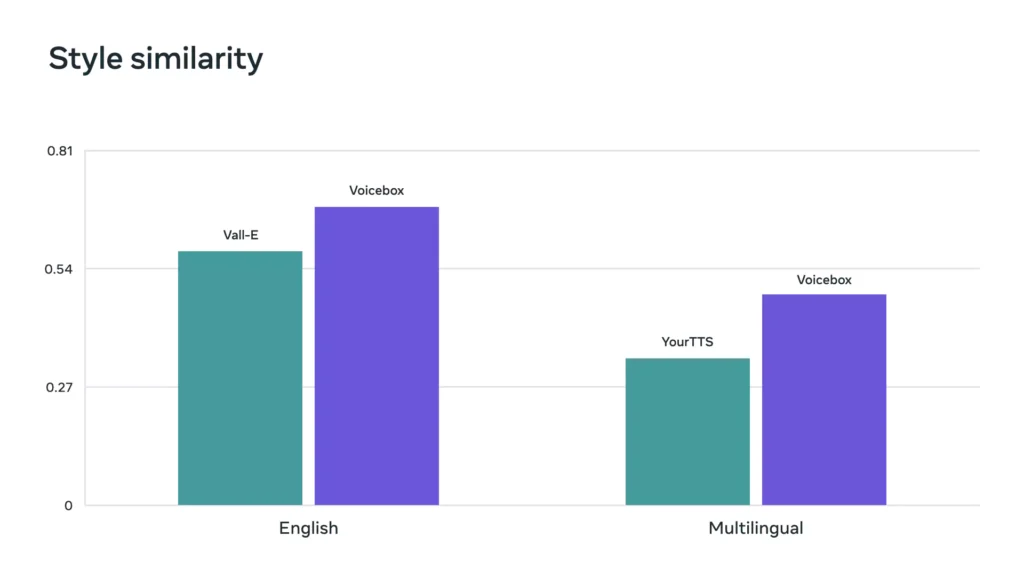

Voicebox is built on Flow Matching, which has been shown to improve on diffusion models. On zero-shot text-to-speech, Voicebox outperforms VALL-E, the current state-of-the-art English model, on both intelligibility (a word error rate of 1.9% vs. 5.9% for VALL-E) and audio similarity (0.681 vs. 0.580 for VALL-E), while being up to 20 times faster. For cross-lingual style transfer, Voicebox outperforms YourTTS, reducing the average word error rate from 10.9% to 5.2% and raising audio similarity from 0.335 to 0.481.
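To make the intelligibility figures above concrete, here is a minimal sketch of how a word error rate (WER) is commonly computed: the word-level edit distance between a reference transcript and a hypothesis, divided by the number of reference words. In evaluations like this one the hypothesis is typically the output of a speech recognizer run on the synthesized audio, but that setup is an assumption here, and the function names `word_error_rate` and `relative_reduction` are illustrative rather than part of any Voicebox tooling.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


def relative_reduction(baseline: float, improved: float) -> float:
    """Fraction by which `improved` is lower than `baseline`."""
    return (baseline - improved) / baseline


if __name__ == "__main__":
    # One dropped word out of six reference words.
    print(word_error_rate("the cat sat on the mat", "the cat sat on mat"))
    # Relative WER reductions implied by the figures quoted above.
    print(relative_reduction(5.9, 1.9))   # VALL-E -> Voicebox
    print(relative_reduction(10.9, 5.2))  # YourTTS -> Voicebox
```

Read this way, the quoted numbers correspond to a relative WER reduction of roughly 68% against VALL-E and roughly 52% against YourTTS.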

Although there are a number of exciting applications for generative speech models, we are not currently making the Voicebox model or code available to the public because of the potential for misuse. To advance the state of the art in AI, we believe it is important to be open with the AI community and share our research, but it is also necessary to strike the right balance between openness and responsibility. With these considerations in mind, we are releasing audio samples and a research paper that describes our approach and the results we achieved. In the paper, we also explain how we built a highly effective classifier that can distinguish between real speech and audio generated by Voicebox.

A new technique for generating speech

One of the main limitations of existing speech synthesizers is that they can only be trained on data prepared specifically for that purpose. These inputs, known as monotonic, clean data, are difficult to produce, so they exist only in limited quantities, and they result in outputs that sound monotone.

We built Voicebox on the Flow Matching model, Meta's latest advance in non-autoregressive generative models, which can learn a highly non-deterministic mapping between text and speech. Non-deterministic mapping is useful because it lets Voicebox learn from varied speech data without those variations having to be carefully labeled. As a result, Voicebox can train on more diverse data and on a much larger scale of data.
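As a rough illustration of what training with Flow Matching involves, the sketch below builds one regression target using the optimal-transport conditional path from the Flow Matching literature: a noise sample `x0` is linearly interpolated toward a data sample `x1`, and the network is asked to predict the constant velocity along that path. This is an assumption-laden toy, not Voicebox's implementation: the value of `SIGMA_MIN`, the plain-list vectors, and the `predict_velocity` callable are all hypothetical, and the text and audio-context conditioning that Voicebox uses is left out.

```python
import random

SIGMA_MIN = 1e-5  # assumed small constant; not taken from this article


def flow_matching_pair(x1, t, rng, sigma_min=SIGMA_MIN):
    """Return (x_t, u_t) for one data sample x1 at time t in [0, 1].

    x_t is a point on the straight path from Gaussian noise x0 (t = 0)
    to (almost exactly) the data x1 (t = 1); u_t is the path's velocity.
    """
    x0 = [rng.gauss(0.0, 1.0) for _ in x1]  # noise sample
    scale = 1.0 - (1.0 - sigma_min) * t
    x_t = [scale * n + t * d for n, d in zip(x0, x1)]
    u_t = [d - (1.0 - sigma_min) * n for n, d in zip(x0, x1)]
    return x_t, u_t


def flow_matching_loss(predict_velocity, x1, t, rng):
    """Mean squared error between predicted and target velocity."""
    x_t, u_t = flow_matching_pair(x1, t, rng)
    v = predict_velocity(x_t, t)  # hypothetical model call
    return sum((a - b) ** 2 for a, b in zip(v, u_t)) / len(x1)


if __name__ == "__main__":
    rng = random.Random(0)
    features = [0.3, -1.2, 0.8]  # stand-in for one frame of audio features
    zero_model = lambda x_t, t: [0.0] * len(x_t)  # untrained placeholder
    print(flow_matching_loss(zero_model, features, t=0.5, rng=rng))
```

Because the target velocity does not depend on `t`, the learned vector field can be integrated from noise to data with relatively few solver steps at generation time, which is one common explanation for why this family of models can be fast at inference.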

We trained Voicebox on more than 50,000 hours of recorded speech and transcripts from public domain audiobooks in English, French, Spanish, German, Polish, and Portuguese. Voicebox is trained to predict a speech segment when given the surrounding speech and the transcript of the segment. Having learned to infill speech from context, the model can then apply this skill across speech-generation tasks, including generating portions in the middle of an audio recording without having to recreate the entire input.
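The infilling objective described above can be sketched as a data-preparation step: hide a contiguous span of audio frames, keep the rest as context, and ask the model to reconstruct only the hidden span given the context and the transcript. The function name, the dictionary layout, the use of `None` for hidden frames, and the 30% to 70% masking range are hypothetical choices made for illustration; the article does not specify how spans are selected.

```python
import random


def make_infilling_example(frames, transcript, rng, min_frac=0.3, max_frac=0.7):
    """Hide one contiguous span of frames and return a training example.

    frames: list of per-frame audio features (any objects).
    transcript: text for the whole utterance, including the hidden span.
    """
    n = len(frames)
    if n == 0:
        raise ValueError("frames must not be empty")
    # Pick a span length (at least one frame) and a valid start position.
    span = max(1, int(n * rng.uniform(min_frac, max_frac)))
    start = rng.randint(0, n - span)
    mask = [start <= i < start + span for i in range(n)]
    return {
        "context": [None if m else f for f, m in zip(frames, mask)],
        "mask": mask,              # True where the model must predict
        "transcript": transcript,  # conditioning text
        "target": [f for f, m in zip(frames, mask) if m],
    }


if __name__ == "__main__":
    rng = random.Random(0)
    example = make_infilling_example(list(range(20)), "hello world", rng)
    print(example["mask"])
    print(example["target"])
```

In a setup like this the training loss would be computed only on frames where `mask` is true, so the surrounding audio acts purely as context.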

Because of this versatility, Voicebox performs well across a variety of tasks, including:

In-context text-to-speech synthesis: Using an input audio sample just two seconds long, Voicebox can match the sample's audio style and use it for text-to-speech generation. Future projects could build on this capability by bringing speech to people who are unable to speak, or by letting people customize the voices used by non-player characters and virtual assistants.

Cross-lingual style transfer: Given a sample of speech and a passage of text in English, French, German, Spanish, Polish, or Portuguese, Voicebox can produce a reading of the text in that language in the style of the sample. This capability is exciting because it could one day help people communicate in a natural, authentic way even when they do not share a common language.

Speech editing and denoising: Thanks to its in-context learning, Voicebox is good at generating speech that seamlessly edits segments within audio recordings. It can resynthesize a portion of speech corrupted by short-duration noise, or replace misspoken words, without the entire speech having to be rerecorded. A person could identify which raw segment of the speech is corrupted by noise (such as a dog barking), crop it, and instruct the model to regenerate that segment. One day, this capability could make cleaning up and editing audio as easy as adjusting photos with popular image-editing tools.

Diverse voice sampling: Having learned from diverse in-the-wild data, Voicebox can generate speech that is more representative of how people actually talk in the six languages listed above. In the future, this capability could be used to generate synthetic data that helps train speech assistant models more effectively. Our results show that speech recognition models trained on Voicebox-generated synthetic speech perform nearly as well as models trained on real speech, with about 1% degradation in error rate, compared with 45% to 70% degradation for synthetic speech from earlier text-to-speech models.
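For the speech editing and denoising workflow in the list above (identify the noisy segment, crop it, regenerate it), the first two steps amount to turning a time span into a per-frame mask. The sketch below shows one way that could look. The 100 frames-per-second rate, the function name, and the use of `None` for cropped frames are assumptions for illustration, and the regeneration step itself is not shown because the model is not publicly available.

```python
import math


def crop_noisy_span(frames, start_s, end_s, frame_rate_hz=100.0):
    """Blank out the frames that overlap the time span [start_s, end_s).

    Returns (context, mask): context has None where audio was cropped,
    and mask is True for frames the model would be asked to regenerate.
    """
    if not 0.0 <= start_s < end_s:
        raise ValueError("expected 0 <= start_s < end_s")
    n = len(frames)
    first = min(n, int(start_s * frame_rate_hz))       # round start down
    last = min(n, math.ceil(end_s * frame_rate_hz))    # round end up
    mask = [first <= i < last for i in range(n)]
    context = [None if m else f for f, m in zip(frames, mask)]
    return context, mask


if __name__ == "__main__":
    # Three seconds of placeholder frames with a dog bark from 0.5 s to 1.0 s.
    frames = list(range(300))
    context, mask = crop_noisy_span(frames, 0.5, 1.0)
    print(sum(mask), "frames marked for regeneration")
```

Rounding the start down and the end up errs on the side of regenerating slightly more audio rather than leaving a sliver of noise in the context.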